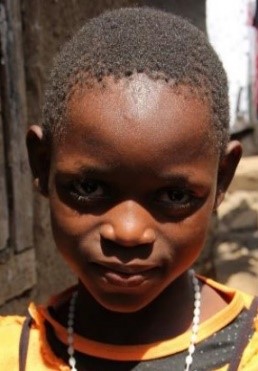

I Hear You is a project that SINTEF has carried out in collaboration with NTNU and two local partners in Tanzania. The project has developed a game-based hearing test for screening children's hearing. The test can be performed with standard tablets and headphones, which makes hearing testing more accessible. The aim is to create a community-based hearing service that can detect children with impaired hearing and help give them a better education.

The continuation of this project, which we call I Hear You 2, will examine the effect of various interventions that can help improve the learning situation for children. This project was nominated for the 2022 EARTO (European Association of Research and Technology Organisations) Innovation Award in the Impact Expected category, where we received a bronze medal. EARTO has also made a video that presents the project well.

The projects have been, and continue to be, funded by the Research Council of Norway through the programs Visjon2030 (A new Hearing Care Service in Tanzania, I Hear You, NFR 267527) and Norglobal2 (Inclusive Hearing Care for School Children in Tanzania, I Hear You 2, NFR 316345).